Voice Agents

Overview


Voice Agents let you build low-latency spoken interfaces on top of OpenAI's speech-to-speech models. The SDK preserves the Realtime API's mental model but wraps the raw event flow in RealtimeAgent, RealtimeSession, and transport helpers that make tools, guardrails, handoffs, and session history easier to work with.

Under the hood, the concepts covered in the official Realtime API guides (Realtime API with WebRTC, Realtime conversations, and voice activity detection) still apply. The Voice Agents SDK adds a TypeScript-first layer on top of that API, so you can focus on product logic instead of rebuilding transport and event handling from scratch.
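
Without the SDK, a client usually handles the Realtime API's server events itself, switching on each event's `type` field. The sketch below is a hypothetical, simplified version of that plumbing: the `ServerEvent` shape and the `createEventRouter` helper are illustrative assumptions, and only the event type strings (`session.created`, `input_audio_buffer.speech_started`, `response.done`) come from the Realtime API. It shows the kind of raw event handling that `RealtimeSession` and the transport helpers wrap for you.

```typescript
// Hypothetical, simplified shape of a raw Realtime server event.
// Real events carry many more fields; only `type` is used here.
interface ServerEvent {
  type: string;
  [key: string]: unknown;
}

type Handler = (event: ServerEvent) => void;

// Hypothetical helper: routes each raw event to the handlers registered
// for its `type`. This is the kind of plumbing the SDK wraps.
function createEventRouter() {
  const handlers = new Map<string, Handler[]>();

  return {
    on(type: string, handler: Handler): void {
      const list = handlers.get(type) ?? [];
      list.push(handler);
      handlers.set(type, list);
    },
    // Returns how many handlers ran, so callers can spot events
    // that nothing is listening for.
    dispatch(event: ServerEvent): number {
      const list = handlers.get(event.type) ?? [];
      for (const handler of list) handler(event);
      return list.length;
    },
  };
}

// Usage: react to the user starting to speak and to finished responses.
const router = createEventRouter();
const seen: string[] = [];
router.on("input_audio_buffer.speech_started", () => seen.push("user speaking"));
router.on("response.done", () => seen.push("response finished"));

router.dispatch({ type: "session.created" }); // no handler registered
router.dispatch({ type: "input_audio_buffer.speech_started" });
router.dispatch({ type: "response.done" });
// seen is now ["user speaking", "response finished"]
```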

Start here

What the SDK adds

- Browser-first WebRTC setup with ephemeral client tokens.

- Server-side WebSocket and SIP transport options.

- Automatic interruption handling and local conversation history updates.

- Multi-agent orchestration through realtime handoffs.

- Function tools, hosted MCP tools, approvals, and delegation patterns.

- Output guardrails and tracing support for live spoken interactions.
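
The interruption bullet above is easier to picture at the protocol level. When the user starts speaking over the assistant, a client working directly against the Realtime API has to stop playback and tell the server how much of the assistant's audio was actually heard, using the `conversation.item.truncate` client event with its `item_id`, `content_index`, and `audio_end_ms` fields. The minimal sketch below assumes a hypothetical local history of `HistoryItem` objects and a hypothetical `handleInterruption` helper; neither is part of the SDK, which is described as doing comparable bookkeeping automatically.

```typescript
// Hypothetical local history entry (not an SDK type).
interface HistoryItem {
  id: string;
  role: "user" | "assistant";
  audioDurationMs: number;
  interrupted: boolean;
}

// Shape of the Realtime API's `conversation.item.truncate` client event.
interface TruncateEvent {
  type: "conversation.item.truncate";
  item_id: string;
  content_index: number;
  audio_end_ms: number;
}

// Hypothetical helper: call it when the user starts speaking while the
// assistant's audio is still playing. It marks the item as interrupted in
// local history and returns the event to send so the server can drop the
// audio the user never heard.
function handleInterruption(
  items: HistoryItem[],
  playingItemId: string,
  playedMs: number,
): TruncateEvent | null {
  const item = items.find((h) => h.id === playingItemId);
  if (!item || item.role !== "assistant") return null;

  // Never report more audio as heard than the item actually contains.
  const audioEndMs = Math.max(0, Math.min(Math.floor(playedMs), item.audioDurationMs));
  item.interrupted = true;

  return {
    type: "conversation.item.truncate",
    item_id: item.id,
    content_index: 0, // assumes the audio is the item's first content part
    audio_end_ms: audioEndMs,
  };
}

// Usage: the user cuts in 1.2 s into a 4 s assistant reply.
const localHistory: HistoryItem[] = [
  { id: "item_user_1", role: "user", audioDurationMs: 2000, interrupted: false },
  { id: "item_asst_1", role: "assistant", audioDurationMs: 4000, interrupted: false },
];
const truncate = handleInterruption(localHistory, "item_asst_1", 1200);
// truncate.audio_end_ms is 1200 and localHistory[1].interrupted is now true
```

Clamping `audio_end_ms` to the item's duration keeps a late or inaccurate playback clock from producing a truncate request past the end of the audio.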

Pick the next page

| If you need to… | Go here |
|---|---|
| Connect a browser client safely with WebRTC and ephemeral tokens | Voice Agents Quickstart |
| Understand session lifecycle, VAD, interruptions, image input, tools, and history | Building Voice Agents |
| Decide between WebRTC, WebSocket, SIP, and custom transports | Realtime Transport Layer |
| Run a phone or telephony experience on Twilio | Realtime Agents on Twilio |
| Connect from Cloudflare Workers or other workerd runtimes | Realtime Agents on Cloudflare |
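
The first row's advice to connect browsers with ephemeral tokens comes down to keeping your standard API key on the server and handing the browser a short-lived client secret instead. A minimal sketch, assuming the GA Realtime `POST /v1/realtime/client_secrets` endpoint, a `session` body with `type: "realtime"`, and the `gpt-realtime` model name; `buildClientSecretRequest` is a hypothetical helper, so confirm the exact request shape against the Voice Agents Quickstart.

```typescript
// Hypothetical server-side helper that builds (but does not send) the
// request for a short-lived Realtime client secret. The standard API key
// is only ever used here, on the server.
interface ClientSecretRequest {
  url: string;
  init: {
    method: "POST";
    headers: Record<string, string>;
    body: string;
  };
}

function buildClientSecretRequest(
  apiKey: string,
  model = "gpt-realtime", // assumed model name; use the one your app targets
): ClientSecretRequest {
  if (!apiKey) throw new Error("Missing server-side OpenAI API key");
  return {
    url: "https://api.openai.com/v1/realtime/client_secrets",
    init: {
      method: "POST",
      headers: {
        Authorization: `Bearer ${apiKey}`,
        "Content-Type": "application/json",
      },
      body: JSON.stringify({ session: { type: "realtime", model } }),
    },
  };
}

// Usage on the server, e.g. inside an HTTP route handler:
//   const { url, init } = buildClientSecretRequest(process.env.OPENAI_API_KEY ?? "");
//   const res = await fetch(url, init);
//   const data = await res.json(); // return only the ephemeral token to the browser
const tokenRequest = buildClientSecretRequest("sk-test-placeholder");
```

Separating request construction from `fetch` keeps the helper easy to test. In a real route you would send the request and return only the ephemeral token (the Quickstart reads it from the response's `value` field) to the browser, never the API key itself.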

Why speech-to-speech

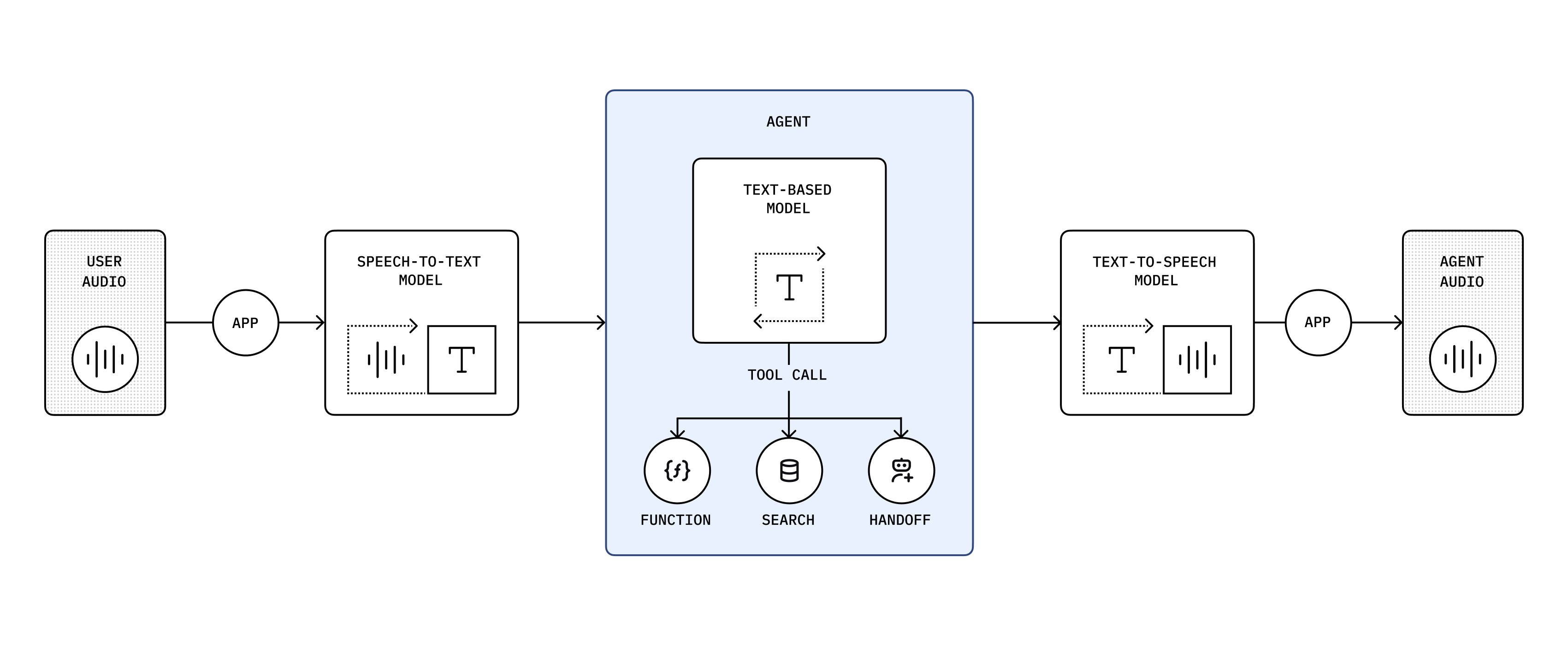

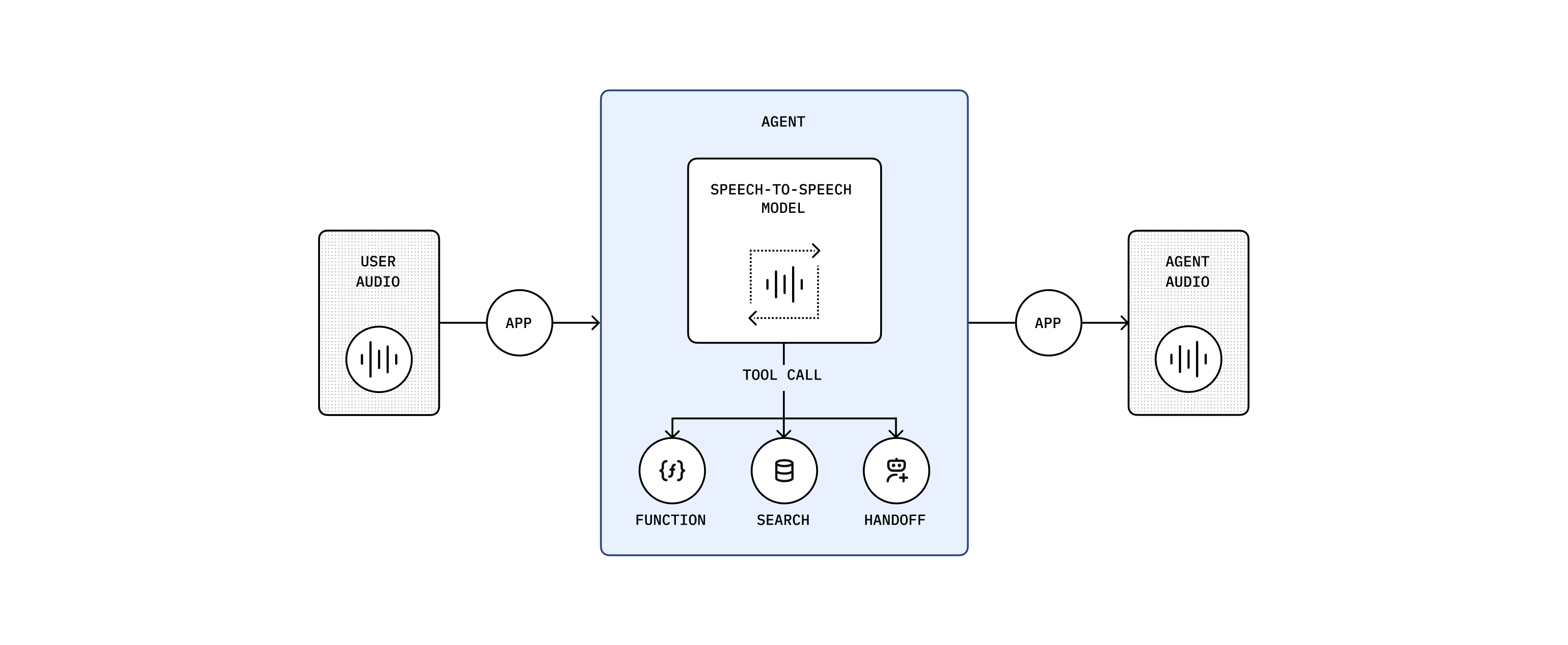

Speech-to-speech models process user audio directly, so you do not have to build a separate speech-to-text, text reasoning, and text-to-speech chain for every turn. That keeps latency down and makes interruptions, mixed text and voice input, and tool calls feel much more natural in realtime applications.